In a past post, I outlined the different levels of user onboarding and encouraged practitioners to find more impactful applications of their onboarding expertise. My hope is that we can move beyond instructing users about UI or getting them to sign up for an account (what I call interface orientation, the lowest level of onboarding). Instead, we can build guidance that provides higher levels of value: helping people understand how products and services can create meaning in their own lives, and helping them understand how their use of a product, service, or system affects the larger societal system they are part of.

In the era of AI, we have the opportunity to uplevel onboarding design, but teams still tend to use agents only for rote interface orientation. How might we leverage AI agents for greater benefit?

Recently I came across an episode of Inner Cosmos, a podcast that looks at the relationship between our brain and our experiences. The episode, “What does alignment look like in a society of AIs?,” featured host and neuroscientist David Eagleman interviewing cognitive scientist Danielle Perszyk about her work with and vision for more personal, augmentative AI agents. This discussion articulated some clear opportunities for how agents built for “aligning minds” could support this path toward higher-level onboarding.

Perszyk and her team are working to develop personal AI agents that are modeled on, and understand, an individual's context. Her goal isn't to create artificial general intelligence (AGI) as a replacement for the human mind, but to create agents that help people navigate the society they're part of. She sees agents as translators and mediators that support our social collaboration rather than as human equivalents. Here are three opportunities I saw in Perszyk's ideas for personal agents to transform the impact of the user onboarding design practice:

Shifting interface orientation off the user

What if a new user didn’t have to deal with navigating new, unfamiliar, or complex interfaces at all?

Even with today's newer and smarter tools, most new users spend their time in software products trying to figure out how to reach the content and features they care about. Meanwhile, product teams keep spending new users' time "educating" them about UI mechanics based on the team's view of what users should be doing: where buttons are, how navigation works, what features exist, and endless prompts to sign up or sign in. This effort is often wasted, because users don't want to spend time learning how someone else thinks they should use software.

In her interview, Perszyk talked of this problem as observed in research conducted on email app users: “We mostly just stumble through [using software], and […] most people don’t have the time to keep up with all of the new things that you can do. And when they sit down to look at their email, they just want to, you know, send that email off, or just want to find that thing. And so they’re not deeply engaged with learning all of the things you can do.”

Designing an interface that is immediately understandable and usable to everyone is probably impossible. Modern interfaces need to serve too many different audiences, use cases, and contexts. Enterprise designers especially know this struggle is real.

This is where Perszyk's idea of AI agents trained on a user's context could help. That context could include the user's goals and their history with other interface paradigms. A user's personal agent could provide an interpretive layer on top of existing software, giving someone the context they need for a task without always being beholden to screens.

Perszyk acknowledged that there's still a time and a place for good interface design in tools where the tool itself helps your work. She gives the example of creative software: "If you didn't have all of the UIs and all of the drop downs, you probably wouldn't even know where to start. Some of the tools are actually really helpful in scaffolding your understanding of what's possible. Whereas other tools just distract so much from the actual goal." But even in tools where a user wants to spend their time, a mediating AI agent could still reinterpret the interface elements to better support the user's goal. If the user's goal is to jump in and create digital art, how can we get everything that doesn't support that goal out of the way?

What might this mean for an onboarding practitioner? It means we could stop spending so much time searching for one universal UI pattern that magically teaches every user how to navigate our product (an impossible task). It also means moving to a mindset closer to that of API and services design. Rather than defining the UI itself, you're defining the UI parameters that an agent attached to a user can change for that user. You're giving the agent a range of useful defaults that you know have worked for other users, and you're crafting metadata an agent can consume to understand your intent for a product. In short, you're teaching the agent how to negotiate the right experience between your product and the user it represents.
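To make this API-and-services mindset more concrete, here is a minimal Python sketch. Everything in it is hypothetical. The `AgentManifest` and `Parameter` names, the parameter names, and the assumption that an agent submits its requested settings as a dictionary are all illustrations, not an existing agent standard or protocol. The sketch shows the three pieces described above: parameters an agent may adjust, defaults with a recorded rationale, and intent metadata. It also includes a `resolve` method that honors an agent's requests only within the range the product team allows.

```python
from dataclasses import dataclass, field


# Hypothetical schema for illustration only; not a real agent standard.
@dataclass(frozen=True)
class Parameter:
    default: str              # value that has worked for other users
    allowed: frozenset[str]   # range the agent may choose from
    rationale: str            # why the default exists (placeholder text below)


@dataclass(frozen=True)
class AgentManifest:
    product_intent: str       # metadata an agent reads to understand the product
    parameters: dict[str, Parameter] = field(default_factory=dict)

    def resolve(self, requested: dict[str, str]) -> dict[str, str]:
        """Merge an agent's requested settings with the product's defaults.

        Undeclared parameters are ignored, and values outside the allowed
        range fall back to the default.
        """
        resolved = {}
        for name, param in self.parameters.items():
            value = requested.get(name, param.default)
            resolved[name] = value if value in param.allowed else param.default
        return resolved


manifest = AgentManifest(
    product_intent="Help artists sell prints of their digital art.",
    parameters={
        "navigation": Parameter(
            default="guided",
            allowed=frozenset({"guided", "compact", "hidden"}),
            rationale="Placeholder: link the research behind this default.",
        ),
        "toolbar": Parameter(
            default="full",
            allowed=frozenset({"full", "minimal"}),
            rationale="Placeholder: link the research behind this default.",
        ),
    },
)

# A user's agent asks for hidden navigation, an unsupported toolbar value,
# and a parameter the product never declared.
settings = manifest.resolve(
    {"navigation": "hidden", "toolbar": "floating", "theme": "neon"}
)
print(settings)  # {'navigation': 'hidden', 'toolbar': 'full'}
```

In this sketch, the agent's request to hide navigation is honored. The unsupported toolbar value falls back to the default, and the undeclared `theme` key is ignored. Keeping the allowed range on the product side is one possible way to let an agent tailor the experience without losing the product's intent.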

Augmenting valuable learning

When we can shift distracting interface orientation off of the user, then we can help people learn what they do find valuable.

Perszyk suggests an agent with a model of a user’s mind could help them learn complex subjects. She gives the example of learning quantum mechanics with the help of a personal context-aware agent: “Say I want to learn something totally new. Maybe it’s not just a software tool, maybe it’s something like quantum mechanics. And that’s really difficult to understand. You need analogies. But what are the right analogies that are going to work for me? Well, if it has a model of my mind, then it can, in a personalized way, help me come to the understanding that I need to get the big picture. And it can sort of follow that in a way, create a curriculum for me.”

Personalized analogies and a curriculum that stays in what Perszyk calls the "sweet spot" between frustration and achievement can help people learn many things. This could help our onboarding practice level up what we help users of products and services learn, which can in turn level up the value of those products and services.

For example, imagine a platform designed to help someone sell prints of their digital art. Today, these platforms use onboarding to teach people how to use the interface to upload images, manage inventory, and navigate orders. But a personal agent as Perszyk defines it could simplify these mechanics and instead, in partnership with the platform, uplevel what the user learns to the skills and knowledge needed to make their art studio more successful. It could teach them how to improve their painting process to produce more vibrant prints. It could guide them through proposing and setting up a gallery exhibition. It could translate concepts like ink pigment mixing or gallery lighting into hands-on activities that use the person's physical environment to help them learn the right techniques (a glimpse of where guided interaction could go).

This desire to uplevel a platform's or product's offering isn't new. Many designers have pushed to broaden their product's offering to add value across multiple parts of the user journey. Historically, this kind of product expansion has been limited by time and resources. But in a world of personal agents that plug into our services and generate tailored content and learning experiences, broadening user learning and value becomes more realistic.

As onboarding practitioners, this means a shift to becoming better learners and better teachers. We have to leverage user research to understand effective learning and teaching strategies for all sorts of topics. We’ll need to teach agents how they can effectively combine good teaching strategies with the intent behind our products and services to broaden the end-user’s growth. And we’ll also need to teach agents to unlearn the ineffective user education patterns of today.

Aligning human communication

Finally, when it comes to more effective learning, learning alongside other humans is the most powerful approach and the one most aligned with higher levels of onboarding.

In Perszyk’s interview she mentions that the sophistication of our cognitive abilities increased, historically, as population densities increased. We get smarter through the friction of aligning our minds with others who think differently than us.

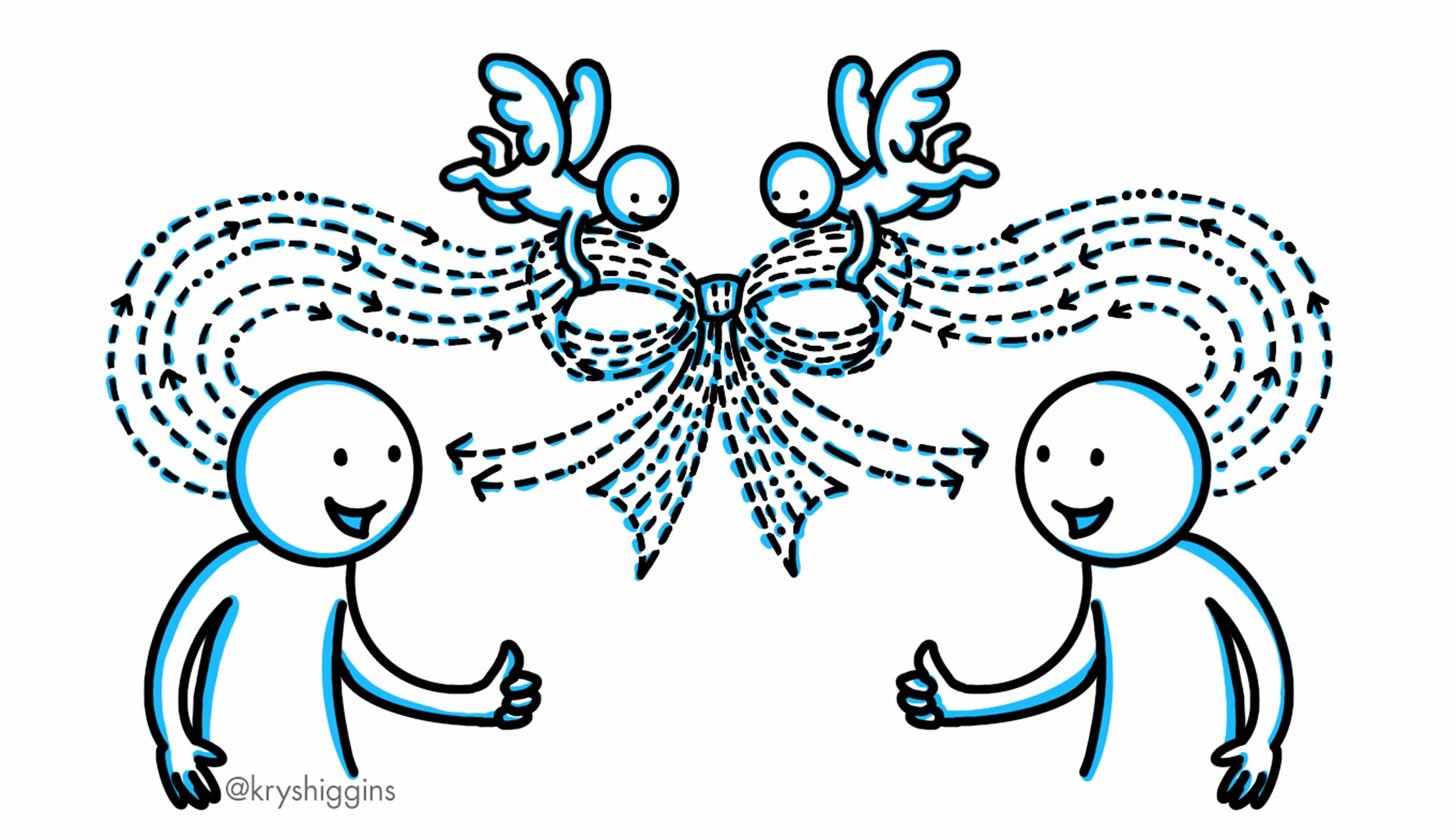

In Perszyk's vision, agents can facilitate that alignment by helping people understand each other's contexts and giving them the tools they need to translate and negotiate differences. If each person has an agent that models their mind, and that agent can communicate with an agent modeling someone else's mind, then each person's agent can "help us communicate at the right level of abstraction [and] save us time." In this model, alignment is not about achieving consensus. It is about removing the unnecessary friction of poor communication so we can reach the useful friction of encountering differing perspectives, which improves learning and outcomes.

Through the user onboarding lens, this approach could further broaden a user's experience and growth by helping them engage meaningfully with the communities around them in the context of what our products and services offer. Returning to the example of a platform for selling digital art, this might mean creating spaces for artists to give and receive critique, with agents helping translate feedback in meaningful, respectful, and actionable ways. The outcome could be a stronger, more engaged digital art community as a whole.

This is the higher level of onboarding I'd love to see. Instead of teaching someone how to click through a UI, we're helping them connect with others, learn from them, and arrive at more positive outcomes that can influence a larger community. For onboarding practitioners, it means designing meaningful pathways in our products and services through which users can connect with other real humans. It also means learning what it takes to be an effective and ethical translator, so we can guide agents toward translating differing points of view in a way that balances the intent of our product or service with the goal of the user.

Moving forward

I appreciate the vision that Perszyk laid out in the podcast interview and how it inspired ideas for the future of onboarding. It may take a while to reach such an agentic future, so here are some steps we can take in the meantime to uplevel our onboarding practice today:

- Shift to systems and services thinking: Consider how to design a good baseline for your product that doesn't rely on tooltips, overlays, and other distracting education. What defaults and examples can you establish that help users, and future agents, interpret the intent of your product? How can you start thinking about the parameters future agents might adapt?

- Learn about learning: Study what it takes to be an effective teacher, not just of software but of concepts.

- Unlearn poor user education patterns: By reducing our use of ineffective patterns today, we reduce how many of them will be used in training sets tomorrow.

- Create meaningful pathways for people to connect: This doesn’t mean a link to share on social media. It means finding relevant ways in your product for one user to relate to others, and learning about effective and ethical moderation techniques.

Onboarding design that focuses on reducing unnecessary distractions, helping people learn, and helping them align with others is going to be the way forward for the practice.

Further reading

In addition to the Inner Cosmos podcast cited throughout this post, I suggest checking out the work of design experts and outlets who have been writing about the future of UX design in general. Here are just a few links to get you started:

- MC Dean percolates

- Weiden Li (try Toward Human-Centred AI Research: A Framework for Evolving UX Research in the Age of Artificial Intelligence)

- State of UX in 2026 (Nielsen Norman Group)

I also wrote a book about user onboarding design called Better Onboarding. While it doesn’t focus on AI, it does provide strategies that go beyond interface orientation.