I’m a User Experience (UX) designer, and I happen to have a penchant for the user onboarding side of the field. All UX professionals are onboarding designers, in a way. That’s because UX design involves closing the gap between systems, services, and the humans who use them through thoughtful design of virtual and physical interfaces. Good UX designers work hard to minimize how much time people have to spend learning and using interfaces, trying to make them as intuitive as possible. This is no small feat given that every new user brings a unique set of mental models, and that current technologies are still limited in how far they can tailor an experience to each individual.

Now let’s shift to the neurotechnology industry, specifically brain-computer interfaces (BCIs). BCIs use sensors, which can range from non-invasive to invasive, to “measure brain activity, extract features from that activity, and convert those features into outputs that replace, restore, enhance, supplement, or improve human functions” (ScienceDirect). That definition can call to mind sci-fi stories like The Matrix, Upload, or Altered Carbon, where characters download expertise, control machines with their minds, and do other superhuman things.

But alongside these ideas, I worry that a question may also be forming in people’s minds: “Do we need the discipline of UX design to close the gap between humans and systems if we have direct-to-brain interfacing?”

I’d like to think so! And it certainly seems like the FDA does, at least based on my interpretation of their recently published guidance on using BCIs in patients. The guidance includes a section on human factors and how they need to be addressed to prevent hazards that “might result from aspects of the user interface design that cause the user to fail to adequately or correctly perceive, read, interpret, understand or act on information from the device.” That sounds pretty in line with the gap-closing goals of UX.

Yet, there doesn’t seem to be much talk about including UX professionals in the neurotechnology sector, despite lots of engineering job postings, increasing funding, and growing discourse about the potential consumer-level impacts of BCIs. Perhaps this is because most teams are still in early sensor design, or perhaps UX-related activities are being absorbed by other roles. But I also worry that it’s because UX is so often discussed in the context of web and app design that those working on other kinds of experiences can’t see UX’s relevance.

That’s a shame, because UX designers can offer a lot here. Good UX design is about the broader human factors that underlie all kinds of interface and system designs. With BCIs potentially being the most personal interface of all, the right UX folks are needed to ensure these interfaces are scalable, understandable, accessible, inclusive, and responsible.

In the rest of this post, I hope to inspire neurotechnologists to see where they can involve UX professionals early in their development process. I start with a short list of BCI capabilities based on existing research, followed by a few examples of how the right UX professionals could solve related problems. The examples aren’t exhaustive, but hopefully they are broad enough to give folks ideas and anchored closely enough in current problem spaces that seeing UX’s relevance won’t require much of a leap of the imagination.

To set expectations: I don’t work on BCIs or neurotech myself, although I would like to one day. So this post draws on my personal review of published BCI research; my experience working with other UX professionals on the development of new technologies; and an impassioned hope that, if this technology is developed in the right way, with important human factors considerations driven by the right UX-minded folks, it will make it easier for all humans to onboard to new systems.

What BCIs can do

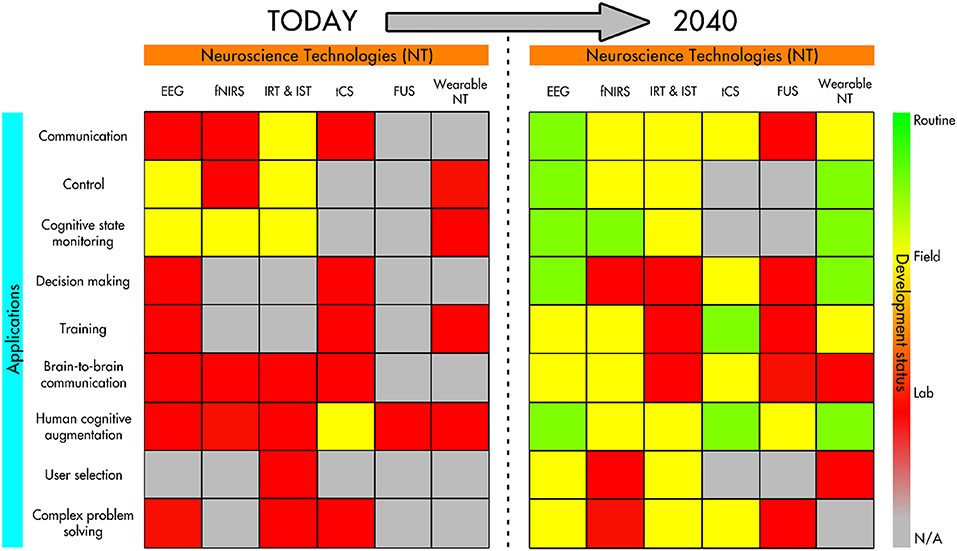

Before I share where UX designers could plug into the world of BCI development, let me describe the areas of application I drew my conclusions from. I adapted most of the following list from “Neurotechnologies for Human Cognitive Augmentation: Current State of the Art and Future Prospects” by Caterina Cinel, Davide Valeriani, and Riccardo Poli, a synthesis of BCI applications circa 2019. It also covers the design of the sensors themselves and reviews ethical considerations. Where applicable, I’ve included links to newer sources.

Communication & control

BCIs can read signals from the brain and translate them into commands that can be used for communication. Paralyzed patients, for example, might use this technology to translate thoughts into digitized text, speech, or handwriting, or to control augmentative devices, like prosthetic limbs. There could also be brain-to-brain communication (Cinel et al. mention one example of brain-to-brain communication in mice).

Adapting interfaces

Instead of simply communicating with interfaces, BCIs could also adapt an interface in response to a person’s brain signals. Cinel et al. refer to a study of air traffic controllers connected to a BCI, in which signals from their nervous systems were used to adapt the information presented by the system in real time, making it easier for the controllers to cope.

Improved problem solving

This can range from allowing someone to perceive more information about a situation so they can make a more informed decision, to improving their decision-making capabilities directly.

Memory and training enhancement

BCIs may improve memory storage and retrieval. In addition, BCIs may help accelerate training for certain jobs by using rapid feedback loops that a person can respond to. As Cinel et al. put it, BCIs may make it “possible to meaningfully adapt the training to the users instead of using a more traditional one-size-fits-all approach.” Onboarding for many, anyone?

Group decision making/collaborative BCIs

Perhaps further out on the horizon is the use of collaborative BCIs (cBCIs). Cinel et al. mention “hyperscanning,” which describes multiple people being connected via BCIs that facilitate group decisions. While in some cases these group decisions appeared to yield higher-quality outcomes than those made by a single individual, Cinel et al. also note that “group performance can depend on group composition, particularly similarity or familiarity between members.”

Ethical considerations

Cinel et al. also surface the following ethical considerations, which often go hand-in-hand with human-factors considerations:

- Privacy

- Agency (including individuality), responsibility, and liability

- Safety and invasiveness

- Societal impacts

There are certainly other possibilities, but this paints a picture we can use for the next section.

What UX design can do for BCIs

This section offers a few examples of how I believe UX professionals would help address the use cases and considerations from the previous section. If you’re someone who happens to be working in BCIs, perhaps this can help you think about how to staff your team.

Data visualization

Output from neural sensors is key to neurotechnologists making decisions about everything from the integrity of a sensor’s design to the meaning of a signal. That means if the data is presented in a confusing or incorrect way, it could lead to bad decisions that affect humans downstream. UX professionals with interface, information, and visual design experience, as well as experience working on expert tools, can find the right ways to present data to help neuroscientists make decisions.

Similarly, consumer-side data visualization may be needed in order to help people make decisions based on information output from a BCI. We already have UX designers working on data visualization for consumer healthcare, fitness, and other products, and many of the same heuristics apply.

Scaling clinical trials and pilots

Right now, most BCIs are in small-scale clinical trials and pilot programs. At these smaller scales, research teams have the luxury of providing frequent, hands-on support to participants to make sure they understand how to use the technology successfully. But eventually, clinical trials and pilot programs need to scale to large, diverse participant pools to ensure the technology is widely useful.

Someone with a UX research, design, or content development background, particularly someone with service design or ecosystems design experience, can be helpful in:

- Developing an end-to-end study plan for a pilot or clinical trial, including identifying various systems and services participants will need to interact with along the way

- Designing recruiting campaigns, online tools, and processes that make it easier for different groups to participate in trials

- Creating onboarding and training materials

- Designing tools that make it easy for researchers and participants to record, share, view, and synthesize their observations

Designing multimodal and/or multi-device interactions

Some UX designers already design multimodal interfaces today, in which multiple types of input are used to control a single interface, or multiple types of output are supported. One example is using voice input alongside touchscreen input. In the future, we may add BCIs into the mix to complement existing inputs and outputs, such as AR and VR interfaces. BCIs themselves can even be multimodal, combining different sensing technologies (for example, EEG combined with eye-tracking sensors). Finally, there may be many use cases where UX designers could help design how BCI hardware is used in concert with other pieces of hardware, to make for intuitive physical interfaces.

UX designers, particularly interaction designers experienced with choreographing multimodal interfaces, hardware + software interfaces, or similar systems, can help discover new combinations of modalities and choreograph those modes to work well together, giving people the best possible control of the system.

Designing for comprehension and “BCI literacy”

Kübler and Muller-Putz (2007) described BCI literacy as “someone’s ability to control a BCI system to an acceptable level,” and noted that some people are better suited to adapting to these technologies than others. Others, like Margaret Thompson, have critiqued this kind of definition, arguing that treating BCI illiteracy as a trait of someone’s physiology or functional approach is a weak basis for solving it.

Good UX designers know that helping people achieve any kind of systems literacy requires a layered approach: making sure we have realistic usage expectations and assumptions at the start; designing hardware and software to be usable and accessible at their core; and providing the tools and guidance people need to self-train and practice. The folks at the GenderMag research project suggest that people approach learning new technologies in different ways (e.g., some people tinker while others prefer a holistic overview). Ultimately, we risk creating BCI illiteracy by over-optimizing BCIs for too-narrow usage expectations.

UX professionals can help improve BCI literacy and comprehension by:

- First, defining what an “acceptable level” of BCI literacy is, so that it’s easily achievable and based on the right expectations and assumptions

- Next, adjusting the design of the BCI itself to reduce or remove the potential gap between any given user and the “acceptable level” of use

- Then, designing ways to onboard the people who do need to close the gap, and giving them pathways to learn at their own pace, such as:

  - Creating demos or customer support tools

  - Using multimodal interfaces as scaffolding

  - Designing calibration processes or tools

  - And more

UX professionals with experience designing complex software or hardware experiences, designing onboarding and setup/calibration experiences, creating help center content, or designing training software or services could be well suited.

Designing for agency, control, and disclosure

When it comes to BCIs, there could be multiple layers of interpretation affecting agency. For example, an AI layer may be responsible for interpreting the meaning behind signals from neural sensors. Or, in the case of collaborative BCIs, multiple people could have influence over one another.

Both of these examples show a need for deliberate design behind:

- How much control over the system a user has, how much control the system has, and how that’s disclosed

- How the user can interact with controls or give feedback to the system

- How much responsibility the user takes on through the use of the technology, how they’re made aware of that responsibility, and if/how it’s enforced (see, for example, when Nextdoor inserted checkpoints into a reporting flow to prevent racial profiling)

- Finding the right balance between necessary and unnecessary friction to support the above (if we don’t design this deliberately, we risk perpetuating patterns that are already frustrating today, like website cookie disclosure popups)

UX professionals working on complex software applications, product settings, authentication architecture, or operating systems may have the necessary experience with disclosures and controls. Designers who have worked with AI/ML systems can be well suited; as resources like uxofai.com show, there are a number of similar considerations.

More to think about

This is by no means an exhaustive list of the applications for UX professionals in the world of BCIs. But I hope it inspires some ideas about how UX skills could be beneficial, because UX design isn’t limited to designing screens for apps or websites.

Does this mean that anyone with a UX title could work in a BCI role? Unlikely. In the previous section, I gave examples of the specific types of UX experience that might be necessary for different types of projects. A UX designer may also need experience or training in areas like neuroscience, medical design, public policy, ethics, and/or industrial design. This could depend on the makeup of the surrounding research team; e.g., if there are already medical experts on staff, a UX professional can partner with them closely. Those running BCI teams could also consider providing explicit training programs, rather than relying solely on expensive degrees, to be more inclusive when hiring: a diverse team leads to better design. I’m not the person who should decide on specific criteria, but I want to acknowledge that there *are* notable considerations when staffing the people who will decide how BCIs interact with humans.

If you’re a UX professional hoping to learn more about BCIs, you could start with Cinel et al.’s 2019 synthesis, or check out A Beginner’s Guide to Brain-Computer Interface and Convolutional Neural Networks. It can also be helpful to get familiar with the philosophical-technical work of Andy Clark to think broadly about UX’s potential future responsibilities. Finally, if you happen to be a UX professional already working with BCIs, I’d love to hear your thoughts!

This post represents my own views and does not necessarily represent the views or endorsement of any current employer(s).